Commit


Showing 2 changed files with 18 additions and 3 deletions.

| Original file line number | Diff line number | Diff line change |

|---|---|---|

| @@ -0,0 +1,14 @@ | ||

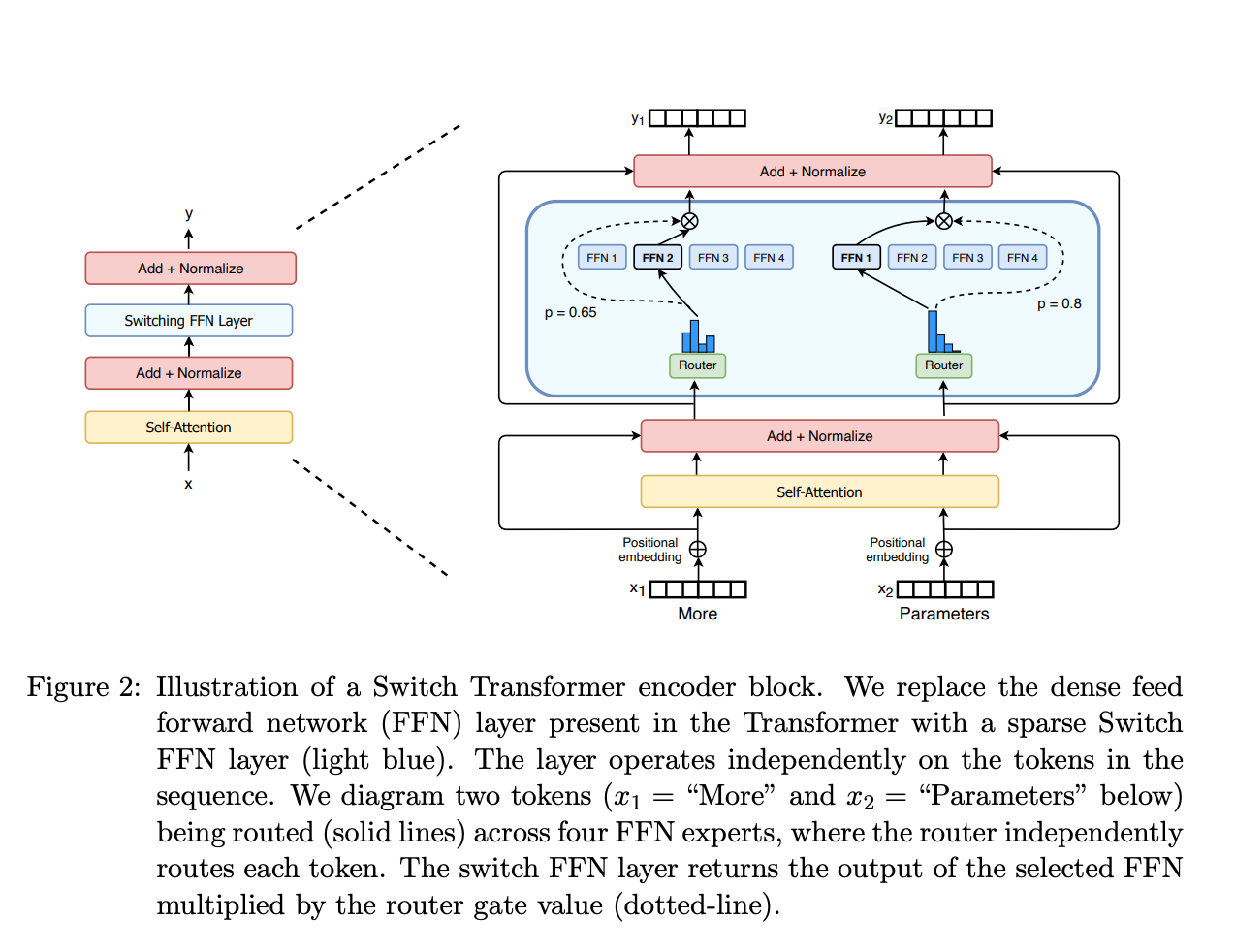

| # Mixture Of Experts (MoE) | ||

|  | ||

| Mixture of Experts (MoE) improves efficiency by combining a set of specialized sub-networks, or "experts," with a gating (router) network. For any given input, the gate activates only a subset of the experts, so the compute spent per input stays small relative to the total number of parameters. | ||

|  | ||

| > Note: my code is a simplified version; it does not add or handle noise, randomness, etc. | ||

| Resources: | ||

| - https://huggingface.co/blog/moe | ||

| - https://cameronrwolfe.substack.com/p/conditional-computation-the-birth | ||

| - https://github.com/lucidrains/mixture-of-experts | ||

| - https://www.youtube.com/watch?v=0U_65fLoTq0 | ||
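
The routing idea in the description (only a subset of experts is activated for a given input) can be illustrated with a small sketch. This is not the code from this commit: `ToyMoE`, its linear experts, and the plain top-k gate are hypothetical names and simplifications chosen for illustration, and, like the simplified version the note mentions, it adds no noise or randomness to the gate.

```python
import math
import random


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def matvec(matrix, vec):
    """Multiply a row-major matrix (list of rows) by a vector."""
    return [sum(w * x for w, x in zip(row, vec)) for row in matrix]


class ToyMoE:
    """Hypothetical top-k MoE layer: linear experts plus a linear gate, no noise."""

    def __init__(self, dim, num_experts, top_k, seed=0):
        rng = random.Random(seed)
        self.top_k = top_k
        # Each expert is a dim x dim linear map (an assumption; real experts are usually MLPs).
        self.experts = [
            [[rng.uniform(-1, 1) for _ in range(dim)] for _ in range(dim)]
            for _ in range(num_experts)
        ]
        # The gate produces one score per expert.
        self.gate = [
            [rng.uniform(-1, 1) for _ in range(dim)] for _ in range(num_experts)
        ]

    def route(self, x):
        """Return (expert_index, weight) pairs for the top_k highest-scoring experts."""
        scores = matvec(self.gate, x)
        ranked = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
        top = ranked[: self.top_k]
        # Renormalize the gate scores over the selected experts only.
        weights = softmax([scores[i] for i in top])
        return list(zip(top, weights))

    def forward(self, x):
        """Weighted sum of the selected experts' outputs; other experts are never run."""
        out = [0.0] * len(x)
        for idx, w in self.route(x):
            y = matvec(self.experts[idx], x)
            out = [o + w * v for o, v in zip(out, y)]
        return out


moe = ToyMoE(dim=4, num_experts=8, top_k=2)
x = [0.5, -1.0, 0.25, 2.0]
routing = moe.route(x)   # which experts fire, and with what weight
output = moe.forward(x)  # combined output of the 2 active experts
print(routing)
print(output)
```

With `top_k=2` and 8 experts, only two expert matrices are multiplied per input, which is where the compute saving comes from. Production implementations typically add gate noise and load-balancing terms, which this sketch leaves out.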
